When working with databases, it's common to create a seed.sql file which contains a subset of production data for testing.

During early development, it's fine to dump the entire database and restore it on your development machine. However, once you have production users this becomes a security issue - do you really want to dump your users' data onto your local machines?

There are many ways to solve this, but recently I stumbled upon a neat way to do it using PostgreSQL's Row Level Security (RLS).

The concept is simple:

- Create a database user with restricted access.

- Define some RLS rules for that user, limiting what data they can access.

- Run

pg_dumpas that user.

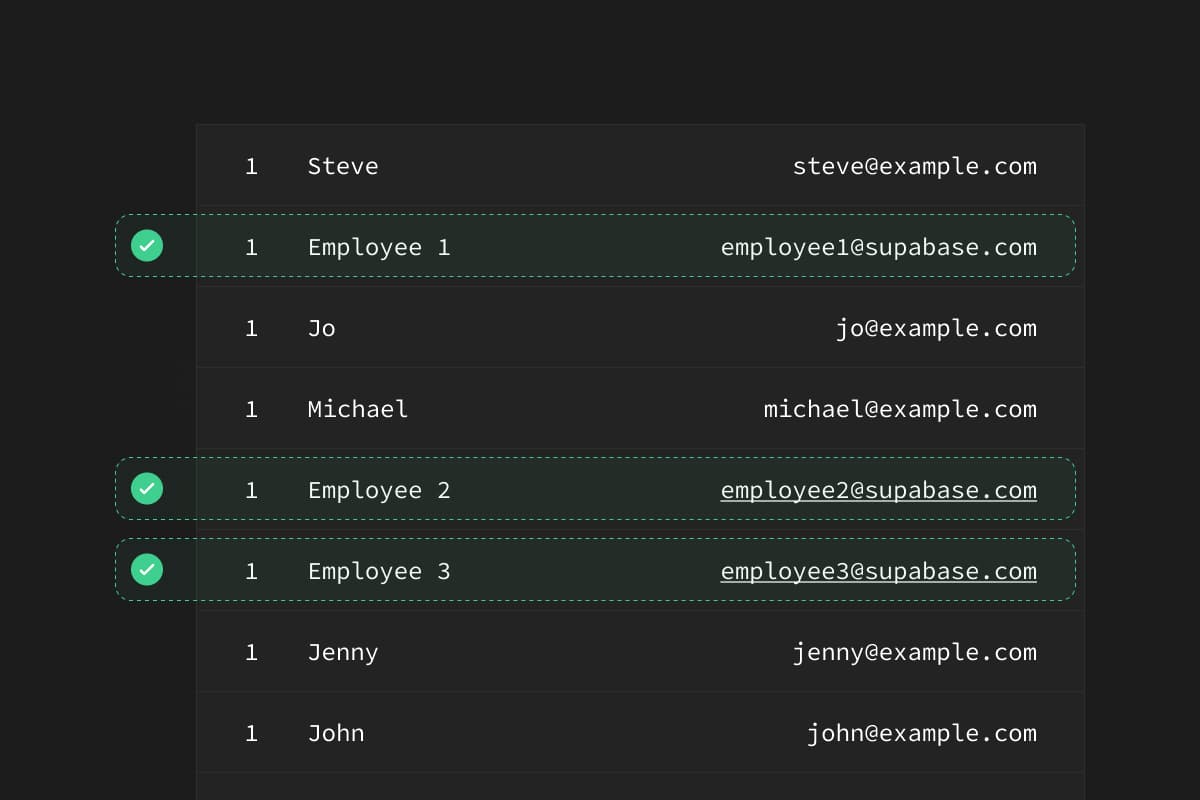

For this scenario, let's imagine that you have a table called profiles in your database:

id | name | email |

|---|---|---|

1 | Employee 1 | employee1@supabase.com |

2 | Employee 2 | employee2@supabase.com |

3 | Employee 3 | employee3@supabase.com |

4 | Jenny | jenny@example.com |

5 | Joe | joe@example.com |

In this case, if we ran a pg_dump we will save Jenny and Joe's personal data. We don't want that, so let's create a Postgres user called exporter, who can only dump the data we want.

Step 1: Prepare a user#

Create a user to connect to the database. We'll call them exporter and grant them access to the public schema:

Step 2: Create data access rules#

Let's turn on RLS for this table and limit the data which exporter can access:

Step 3: Export the data#

Now we can use pg_dump to get only the data that we need.

Run the dump with the exporter user that we created above and use the --enable-row-security flag to ensure that the dump succeeds.

And that's it. You can follow this same pattern for any tables that you want to dump.

Data access patterns#

RLS is a bit like appending a “where” clause to a select, so you can create all sorts of data access patterns. Let's see a few more which are useful for extracting seed data.

Using email rules#

Instead of using hardcoded numbers in our RLS policies, we could use email extensions to determine the users who we want to export:

Only recent data#

If we have a table with a lot of data, like an analytics table, we might only care about the last 2 months of data.

Using flags#

If you don't mind having some additional columns in you database, you can add flags to each row to determine whether it's safe to export.

Conclusion#

Using seed data isn't the only way to run development environments. It's also possible to run fully-masked copies of your database using tools like

Snaplet.

We're also bullish on copy-on-write strategies which allow users to "fork" a database at a point in time, a strategy used by Database Lab Engine. DLE uses the ZFS file system to achieve this, but it's within reach of the Postgres core once alternative storage strategies become easier to implement.

If you want to try out the steps we described in this article, fire up a full PostgreSQL database: database.new